Introduction to Elasticsearch and the ELK Stack, Part 2

We finish up this two-part series on Elasticsearch and its role in the ELK stack by examining its architecture.

Welcome back! If you missed Part 1, you can check it out here: Introduction to Elasticsearch and the ELK Stack, Part 1.

The Architecture of Elasticsearch:

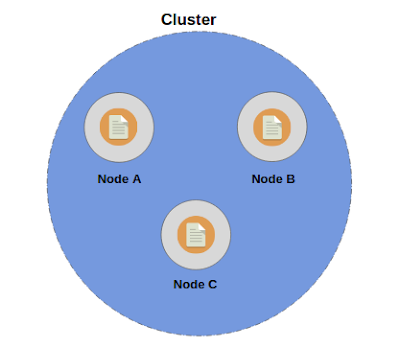

To start things off, we will discuss nodes and clusters, which are at the heart of the Elasticsearch architecture.

Nodes and Clusters

A node is a server that stores data. A cluster is simply a collection of nodes, i.e., servers. Each node contains a part of the cluster's data, that is, the data we add to the cluster. Simply put, the nodes of a cluster together hold the cluster's entire data set.

Each node in the cluster participates in the cluster's searching and indexing capabilities. Besides holding a part of the cluster's data, a node supports indexing new data and modifying existing data. Every node in a cluster is capable of handling HTTP requests from clients that want to insert or modify data through the REST API exposed by the cluster. Whichever node receives an HTTP request is then responsible for coordinating the rest of the process.

It is worth mentioning that each node inside a cluster knows about every other node within the same cluster, and hence is able to forward a request to another node using the transport layer. Also, by default, every node is capable of becoming the master node. The master node is responsible for coordinating changes to the cluster, such as adding or removing nodes and creating or removing indices. In other words, the master node updates the state of the cluster, and it is the only node authorized to do so.

Now, how are nodes and clusters uniquely identified? The answer is unique names for both. The default name of a cluster is elasticsearch (all in lowercase), and the default name of a node is a Universally Unique Identifier (UUID). A developer can, of course, change these defaults as required. Also, by default, when you start some nodes, they automatically join the cluster named elasticsearch, and, if no cluster with that name exists at that moment, a cluster named "elasticsearch" is formed automatically. As a matter of fact, a cluster with only one node is a perfectly valid use case.

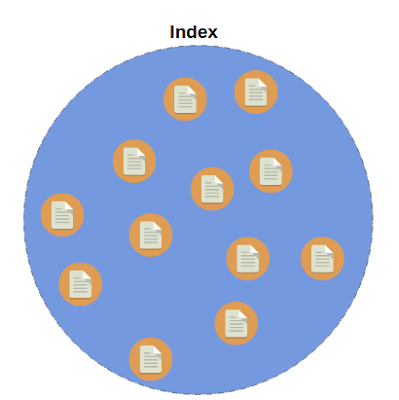

Indices and Documents

Each data item that you store on Elasticsearch nodes is called a document. A document is the smallest unit that can be indexed. Documents are simply JSON objects and are analogous to rows in a relational database such as MySQL.

Each document can have properties, just like columns in a relational database. The question is, where are these documents stored? As you already know, our data is stored across the nodes within the cluster, and the documents are organized within indices. An index is simply a collection of logically related documents and is analogous to a table in a relational database. Just as there is no restriction on the number of rows in a table, you can add any number of documents to an index. Every document is uniquely identified by an ID, which is either assigned automatically by Elasticsearch or supplied by the developer when adding the document to the index. Hence, every document can be uniquely identified by its index and its ID.

Similar to nodes and clusters, indices are also uniquely identified by their names (again, all in lowercase letters). An index name is used when searching for documents: the developer specifies the name of the index in which to search for matching documents. The same applies to adding, removing, and updating documents, i.e., the developer specifies the name of the index.
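To make the index/document relationship concrete, here is a minimal in-memory sketch in Python. It is not Elasticsearch code: `ToyIndex`, the `products` index, and the sample documents are hypothetical, and the automatically generated ID uses Python's `uuid` module purely as a stand-in for whatever ID Elasticsearch would assign.

```python
import json
import uuid


class ToyIndex:
    """In-memory stand-in for one index: a named collection of JSON documents."""

    def __init__(self, name):
        if name != name.lower():
            raise ValueError("index names must be lowercase")
        self.name = name
        self.docs = {}  # document ID -> JSON-serializable dict

    def add(self, document, doc_id=None):
        # The ID is either supplied by the developer or generated automatically.
        if doc_id is None:
            doc_id = uuid.uuid4().hex  # stand-in for an auto-assigned ID
        json.dumps(document)  # raises TypeError if the document is not JSON data
        self.docs[doc_id] = document
        return doc_id

    def get(self, doc_id):
        return self.docs.get(doc_id)


products = ToyIndex("products")
chosen_id = products.add({"name": "kettle", "price": 25}, doc_id="1")
auto_id = products.add({"name": "toaster", "price": 40})

# The pair (index name, document ID) identifies exactly one document.
print(products.name, chosen_id, products.get(chosen_id))
print(products.name, auto_id, products.get(auto_id))
```

The sketch only mirrors the vocabulary used above (index, document, ID); a real cluster additionally spreads the documents of an index across shards and nodes.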

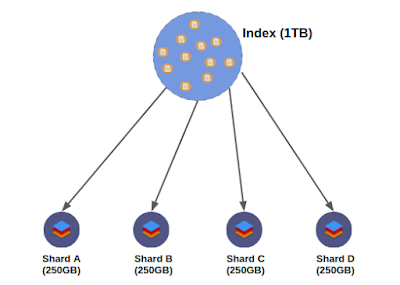

Sharding

Elasticsearch is highly scalable thanks to its distributed architecture, and sharding is what makes this possible. Before moving further into it, let us consider a simple and very common use case. Suppose we have an index that contains a lot of documents and, for the sake of simplicity, that the size of that index is 1 TB (i.e., the sizes of all documents in that index add up to 1 TB). Also assume that you have two nodes, each with 512 GB of space available for storing data. Clearly, the entire index cannot be stored on either of the two nodes, so we need to distribute the index among them.

In cases like this, where the size of an index exceeds the hardware limits of a single node, sharding comes to the rescue. Sharding solves this problem by dividing an index into smaller pieces called shards.

A shard contains a subset of the index's data. When an index is sharded, a given document within that index is stored in only one of the shards. The useful thing about shards is that they can be hosted on any node within the cluster. Returning to our example, we can divide the 1 TB index into four shards of 256 GB each and distribute these four shards across the two available nodes, two shards per node. We have thus managed to store our 1 TB index on two nodes that can each hold only 512 GB. As data volumes grow, the number of shards can be chosen to match, and in this sense, sharding provides scalability.
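The arithmetic of this example can be checked with a few lines of Python. This is only a sketch of the calculation: the node names are made up, 1 TB is taken as 1024 GB, and the round-robin placement is a simplification rather than Elasticsearch's actual allocation logic.

```python
def place_shards(index_size_gb, num_shards, node_capacity_gb):
    """Split an index into equal shards and place them round-robin on the nodes."""
    shard_size_gb = index_size_gb / num_shards
    nodes = list(node_capacity_gb)
    placement = {node: [] for node in nodes}
    for shard in range(num_shards):
        placement[nodes[shard % len(nodes)]].append(shard)
    # Space used on each node, and whether every node stays within its capacity.
    used_gb = {node: len(shards) * shard_size_gb for node, shards in placement.items()}
    fits = all(used_gb[node] <= node_capacity_gb[node] for node in nodes)
    return shard_size_gb, placement, used_gb, fits


# The example from the text: a 1 TB index, four shards, two nodes of 512 GB each.
capacity = {"node-1": 512, "node-2": 512}  # hypothetical node names
shard_size, placement, used, fits = place_shards(1024, 4, capacity)
print(shard_size, placement, used, fits)

# Without sharding (a single 1024 GB shard), no node can hold the index.
_, _, _, fits_unsharded = place_shards(1024, 1, capacity)
print(fits_unsharded)
```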

Along with scalability, sharding has one more major advantage. Operations such as queries can be distributed among multiple nodes and therefore run in parallel, which improves performance because multiple machines can work on the same query at the same time. Now, the question is, when and how do you specify the number of shards an index has? You can (optionally) specify this at index creation time; if you don't, it defaults to 5 (this was the default in Elasticsearch versions before 7.0; from 7.0 onward, the default is 1).

In practice, 5 shards per index is enough for fairly large volumes of data. But what if you start dealing with even larger volumes and need to increase the number of shards in an index? The answer is, you can't: the number of shards of an index cannot be changed after the index has been created. So, if you cannot change the number of shards, then how do you handle the situation? The solution is to create a new index with the number of shards you want and move your data over to the newly created index.
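As a rough illustration of that workaround, the sketch below only builds the two REST requests involved and prints them; it does not contact a cluster. It assumes an Elasticsearch version that offers the Reindex API (`POST /_reindex`) as the way to move the data, which the text above does not name explicitly. The index names `logs-v1` and `logs-v2` and the shard count of 10 are hypothetical.

```python
import json


def create_index_request(index_name, number_of_shards, number_of_replicas=1):
    """Build (method, path, body) for creating an index with explicit shard settings."""
    body = {
        "settings": {
            "number_of_shards": number_of_shards,
            "number_of_replicas": number_of_replicas,
        }
    }
    return "PUT", f"/{index_name}", body


def reindex_request(source_index, dest_index):
    """Build (method, path, body) for copying documents from one index to another."""
    body = {"source": {"index": source_index}, "dest": {"index": dest_index}}
    return "POST", "/_reindex", body


# Hypothetical names: "logs-v1" is the existing index, "logs-v2" its replacement.
steps = [
    create_index_request("logs-v2", number_of_shards=10),
    reindex_request("logs-v1", "logs-v2"),
]
for method, path, body in steps:
    print(method, path, json.dumps(body))
```

The printed requests could then be sent with any HTTP client or pasted into a console; once the copy has finished, clients would be pointed at the new index.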

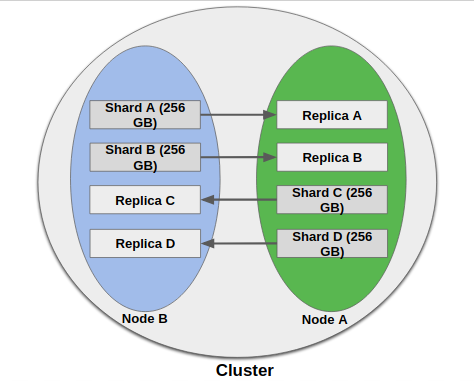

Replication

Even in today's world, hardware failures and similar failures are inevitable, so our system must be prepared for them by having some means of fault tolerance. That's where replication comes to the rescue. Elasticsearch natively supports replication, meaning that shards are copied. These copied shards are referred to as replica shards, or just replicas. The "original" shards that have been copied are called primary shards.

A primary shard and its replicas are together referred to as a replication group. This terminology may seem daunting and, if you are a developer, chances are that you will rarely have to deal with replicas directly. Still, it is always better to be aware of what is going on behind the scenes.

There are two major benefits we get from replication:

- Fault Tolerance and High Availability: To make replication effective, a replica is never allocated to the same node as its primary shard. Hence, even if an entire node fails, at least one copy of every shard that was on the failed node still exists on another node: a replica if the lost copy was a primary shard, or the primary shard if the lost copy was a replica.

- Enhanced Performance: Replication increases search performance because searches can be executed on replicas in parallel, meaning that replicas add to the search capacity of the cluster.

The number of replicas is defined at index creation time (similar to shards). By default, each shard has one replica. Hence, if we specify neither the number of shards per index nor the number of replicas per shard at creation time, a cluster of more than one node would have, with the default of five shards, five primary shards per index and one replica of each, totaling 10 shards per index. Replication requires at least two nodes because a replica shard is never stored on the same node as its primary shard.
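The shard count and the "never on the same node as its primary" rule can be sketched with a toy allocator. This is an illustration only, not Elasticsearch's allocation algorithm: the node names and the `P0`/`R0.0` labels are made up, primaries are placed round-robin, and each replica goes to the least-loaded node that holds no other copy of the same shard.

```python
def total_shards(primaries, replicas_per_primary):
    """Primary shards plus all of their replicas."""
    return primaries * (1 + replicas_per_primary)


def allocate(primaries, replicas_per_primary, nodes):
    """Toy allocator: returns (assignments per node, replicas left unassigned)."""
    assignments = {node: [] for node in nodes}
    unassigned = []
    for p in range(primaries):
        primary_node = nodes[p % len(nodes)]
        assignments[primary_node].append(f"P{p}")
        holders = {primary_node}  # nodes that already hold a copy of shard p
        for r in range(replicas_per_primary):
            candidates = [n for n in nodes if n not in holders]
            if not candidates:
                # No node left that is free of this shard: the replica stays unassigned.
                unassigned.append(f"R{p}.{r}")
                continue
            target = min(candidates, key=lambda n: len(assignments[n]))
            assignments[target].append(f"R{p}.{r}")
            holders.add(target)
    return assignments, unassigned


print(total_shards(5, 1))  # five primaries with one replica each

two_nodes, unassigned_two = allocate(5, 1, ["node-1", "node-2"])
one_node, unassigned_one = allocate(5, 1, ["node-1"])
print(two_nodes, unassigned_two)
print(one_node, unassigned_one)
```

With two nodes, every replica finds a home away from its primary; with a single node, all five replicas remain unassigned, which is the sense in which replication needs at least two nodes.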

Keeping Replicas Synchronized

Now suppose a shard is replicated five times. How are those replicas updated whenever data is changed or removed? Clearly, the replicas need to be kept in sync with each other. How does Elasticsearch manage this? It keeps the replicas in sync by using a model named primary-backup.

In this model, the primary shard of every replication group acts as the entry point for each operation that affects the index, such as adding, updating, or removing documents. This means all such operations are sent to the primary shard. The primary shard is then responsible for validating each incoming operation, for example by checking the structural validity of the request. Only once the primary shard has accepted the operation is it performed locally on the primary shard. Once the operation on the primary shard is complete, it is forwarded to the replicas within the replication group, where it is executed on all of them in parallel.

When the operation has been executed successfully on each of the replicas, the primary shard is informed, and it finally responds to the client to report the successful execution of the operation.
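The write path just described (validate on the primary, apply locally, forward to the replicas, then acknowledge) can be sketched as a small in-memory model. The class names, the operation format, and the validation rules are hypothetical, and the replicas are updated one after another here for simplicity, whereas the text describes them being updated in parallel.

```python
class ShardCopy:
    """One copy of a shard (primary or replica) holding documents by ID."""

    def __init__(self, name):
        self.name = name
        self.docs = {}

    def apply(self, op):
        if op["type"] == "index":
            self.docs[op["id"]] = op["doc"]
        elif op["type"] == "delete":
            self.docs.pop(op["id"], None)


class ReplicationGroup:
    """A primary shard together with its replicas."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self.replicas = replicas

    def write(self, op):
        # 1. The primary validates the structure of the incoming operation.
        if op.get("type") not in ("index", "delete") or "id" not in op:
            return {"ok": False, "reason": "rejected by primary"}
        if op["type"] == "index" and not isinstance(op.get("doc"), dict):
            return {"ok": False, "reason": "rejected by primary"}
        # 2. The accepted operation is performed locally on the primary.
        self.primary.apply(op)
        # 3. It is then forwarded to every replica (sequentially in this sketch).
        acked_by = []
        for replica in self.replicas:
            replica.apply(op)
            acked_by.append(replica.name)
        # 4. Once all replicas have applied it, the primary reports success.
        return {"ok": True, "acked_by": acked_by}


group = ReplicationGroup(ShardCopy("primary"), [ShardCopy("replica-1"), ShardCopy("replica-2")])
ok = group.write({"type": "index", "id": "1", "doc": {"title": "hello"}})
bad = group.write({"type": "index", "id": "2", "doc": "not an object"})
print(ok, bad)
```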

How a Search Query Is Executed (Quick Overview):

Simply put, when a client sends a search query to the cluster, it hits a node. This node, which has received the request from the client, is called the coordinating node, which means that it is now responsible for sending the query out to other nodes, assembling the results, and responding to the client.

If the coordinating node itself contains a shard on which the search query is to be performed, it performs the query on itself first and then sends the query out to every other shard or replica in the index.
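This scatter-and-gather flow can be sketched as follows. The data, the shard numbering, and the substring match are all hypothetical stand-ins; the sketch only shows the coordinating role: query the local shard first, query the remaining shards, then assemble one combined result.

```python
def search_shard(documents, term):
    """Return the documents on one shard whose title contains the term."""
    return [doc for doc in documents if term in doc["title"].lower()]


def coordinate_search(shards, local_shard_id, term):
    """Query the local shard first, then the others, and merge the hits."""
    order = [local_shard_id] + [sid for sid in sorted(shards) if sid != local_shard_id]
    hits = []
    for shard_id in order:
        hits.extend(search_shard(shards[shard_id], term))
    # Assemble a single result list for the client, ordered by document ID.
    return sorted(hits, key=lambda doc: doc["id"])


# Three shards of one index; the coordinating node is assumed to hold shard 1.
shards = {
    0: [{"id": 1, "title": "Intro to Elasticsearch"}, {"id": 4, "title": "Kibana basics"}],
    1: [{"id": 2, "title": "Elasticsearch sharding"}],
    2: [{"id": 3, "title": "Logstash pipelines"}, {"id": 5, "title": "Scaling Elasticsearch"}],
}
results = coordinate_search(shards, local_shard_id=1, term="elasticsearch")
print([doc["id"] for doc in results])
```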

End Notes

Finally, let us briefly discuss how Elasticsearch knows on which shard to store a new document, and how it finds that document when retrieving it by its ID. As a developer, you most probably won't be dealing with this directly, but it does not hurt to know a bit about it. By default, documents should be distributed evenly between shards, so that no shard contains far more documents than another (Elasticsearch does this out of the box). Determining which shard a given document should be stored in, or has been stored in, is called routing. By default, the “routing” value equals the document’s ID. This value is passed through a hash function, and the result determines the exact shard.
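A minimal sketch of this idea, assuming the commonly documented form "hash of the routing value modulo the number of primary shards": `zlib.crc32` is used here only because it gives the same result on every run; it is a stand-in, not the hash function Elasticsearch actually uses, and the document IDs are made up.

```python
import zlib
from collections import Counter


def pick_shard(routing_value, num_primary_shards):
    """Map a routing value (by default, the document ID) to a shard number."""
    digest = zlib.crc32(routing_value.encode("utf-8"))  # stand-in hash function
    return digest % num_primary_shards


# The same ID always lands on the same shard, which is how a document
# can be found again when it is retrieved by its ID.
first = pick_shard("doc-42", 5)
second = pick_shard("doc-42", 5)
print(first == second)

# Many different IDs spread across all five shards.
spread = Counter(pick_shard(f"doc-{i}", 5) for i in range(1000))
print(sorted(spread.items()))
```

Because the result depends on the number of shards, the same ID could map to a different shard if that number changed, which fits with the shard count being fixed when the index is created.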

Going into the details of routing would not help us much in learning Elasticsearch, so we will not dig into it any further.

If you have any questions, write them down in the comments so that we can discuss them further. Thanks!

Published at DZone with permission of Ayush Jain. See the original article here.
